Not sure if it is optimal to base the efficiency of flagging process only to human intelligence (survey). Why not instead train human intelligence to find inappropriate behaviour?

From seeing pipeline in action, there is a lot of cherry-picking. Especially in regards to favouring existing grants. Here is my data analysis post: Lifetime Gitcoin Grants Data Analysis and Hypothesis Testing - #2 by Pfed-prog

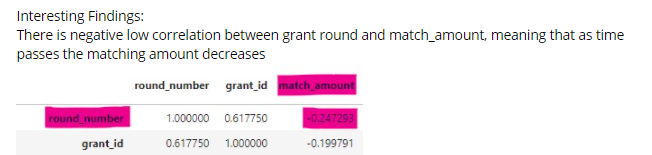

The core issue is the scalability. There is disproportionate number of new grants on the platform. Hence, the strong positive correlation between round_number and grant_id.

However, there is definitely a missing piece of analysis to what extent new grants are actually new users and not old users evolving to cheat the system.