I think there is a lot of potential in investing in data science at Gitcoin.

This is informed by a few things:

- Prior Experience. A few years ago, I was the Director of Engineering at a clean energy startup that set up a fairly robust data warehouse (ETL into a snowflake schema) to run advanced analytics on time series data. I’ve also held a few product-oriented positions at various web2 startups that had mission-critical ecommerce checkout flows, with A/B testing and marketing emails to optimize those funnels. And when I was CTO of an online dating site (a double-sided marketplace, just like Gitcoin), I built a matching engine that matched users on 20 dimensions.

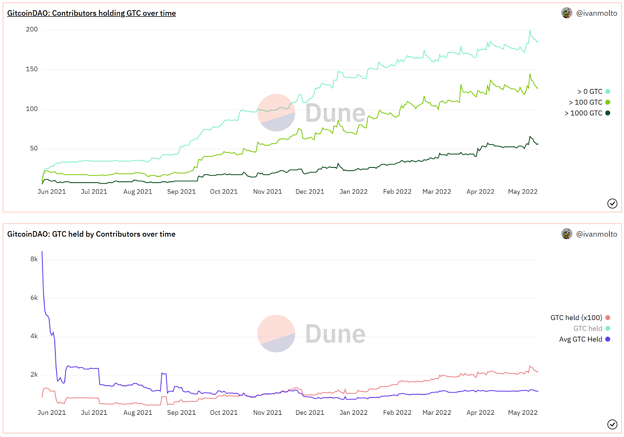

- Per gitcoin.co/results, Gitcoin has helped 66,712 funders reach an audience of 292,817 earners. Gitcoin has facilitated 1,740,075 complete transactions to 10,247 unique earners. Looking at the 4 years of data at Gitcoin, particularly the Grants Rounds data, I have a hunch that there are interesting opportunities in understanding it better.

The objective of this thread is to start a conversation. What should the data science practice at Gitcoin look like?

Here are the data science opportunities I’m aware of at GitcoinDAO:

- Product Analytics & Data Science

  - Responsible for understanding how users use the platform.

- Marketing Analytics & Data Science

  - Responsible for understanding how to drive more core actions (like Grants checkouts).

-

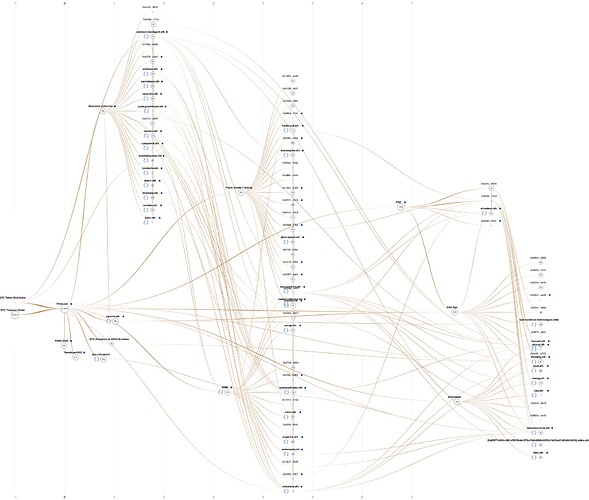

Complex Systems Insights

- Responsible for guiding the QF matching engine with deep analytical insights (perhaps one day even simulating agent-based contributor behaviour)

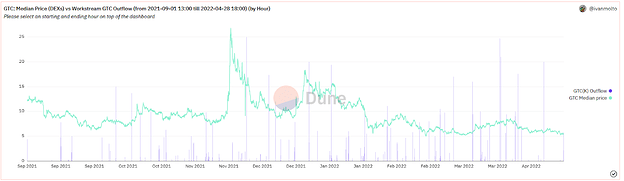

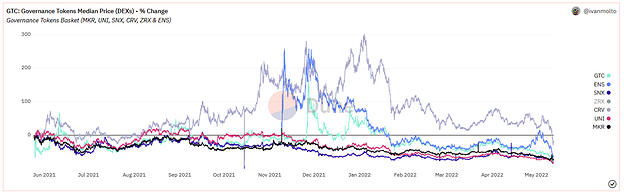

- Responsible for publishing advanced analytics-based insights from our datasets. Heres an example of what this could look like.

- Fraud insights

  - Responsible for (Joe, correct me if I’m wrong) surfacing fraud on the Gitcoin Grants network (whether sybil or collusion) and partnering with Governance to remediate in a legitimate way.
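For readers less familiar with the QF (quadratic funding) matching engine mentioned under Complex Systems Insights, here is a minimal sketch of the textbook quadratic funding formula: each grant's raw match is the square of the sum of the square roots of its contributions, minus the contributions themselves, scaled to fit a fixed matching pool. This is only an illustration, not Gitcoin's production engine, which may apply further adjustments. The function name `qf_match`, the input shape, and the sample numbers are all hypothetical.

```python
from math import sqrt


def qf_match(contributions, pool):
    """Textbook quadratic funding, scaled to a fixed matching pool.

    contributions: dict mapping a grant name to a list of contribution amounts
                   (hypothetical input shape, chosen for illustration)
    pool: total size of the matching pool
    Returns a dict mapping each grant name to its share of the pool.
    """
    raw = {}
    for grant, amounts in contributions.items():
        sum_of_roots = sum(sqrt(a) for a in amounts)
        # Raw match = (sum of sqrt(c_i))^2 - sum of c_i, floored at zero
        # to guard against tiny negative values from float rounding.
        raw[grant] = max(0.0, sum_of_roots ** 2 - sum(amounts))

    total = sum(raw.values())
    if total == 0:
        return {grant: 0.0 for grant in raw}
    # Scale raw matches proportionally so they sum to the pool.
    return {grant: pool * r / total for grant, r in raw.items()}


# Made-up example: four small donors versus one large donor, same total raised.
example = qf_match({"grant_a": [1, 1, 1, 1], "grant_b": [4]}, pool=100)
```

In this made-up example both grants raise the same amount, but the one with broader support receives the match. That sensitivity to the number of distinct contributors is what makes this data interesting to analyze, and also why sybil and collusion detection matter.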

An assortment of tools are used in these practices at Gitcoin. Here are the ones I’m aware of:

- Etherscan

- Dune Analytics

- The Graph

- PostgreSQL

- Google Analytics

- Metabase

- Google Sheets

- Google Slides

- Acquia

- cadCAD

- Machine Learning Tools (not sure which ones)

I’d welcome corrections from any workstream leads on the above. It is just my best approximation of the tools/roles as they currently stand in the DAO.

I’d be curious whether people in the community would be interested in putting forward a proposal to the DAO to formalize a data science practice at GitcoinDAO (which currently resides in multiple different groups at varying levels of coordination).

I’d like to end on these questions:

- What should the data science practice at Gitcoin look like?

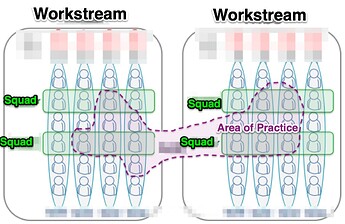

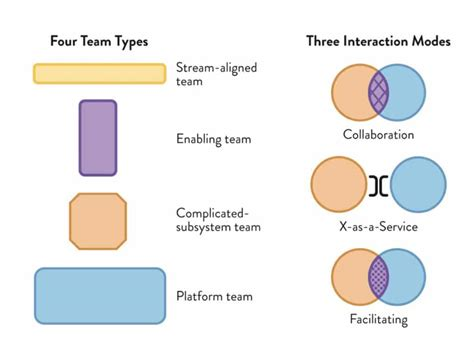

- If Data Science was an area of practice at Gitcoin, what would it look like?

- How could it span multiple workstreams or squads (teams) and cross-pollinate between them?