Hey all, GG19 program round matching results are live here! We’ll have five days for review and feedback, then process payouts on 12/20.

Thank you to @connor for co-authoring this post with me. Thank you to @Joel_m, @ghostffcode, & @stefi_says for contributing to the post-round analysis results. Thank you to @M0nkeyFl0wer @Sov and others within the DAO for their thoughts and reviews.

TL;DR

In GG19, we continued moving toward a variant of QF that uses clustering to build sybil and collusion resistance natively into the mechanism and to reward projects with more diverse, pluralistic communities. GG19 will be the first round in years in which we do not do any closed-source silencing of sybils/donors. Instead, we’re relying solely on our mechanism and Gitcoin Passport.

For this round, we held a proactive governance discussion and a subsequent Snapshot vote to approve the transfer of matching funds. Consequently, there will not be a formal vote to ratify these results; however, we will have five days for review and discussion on the forums.

GG19 Overview

GG19 took a few steps to evolve our program round strategy from prior rounds. This time, 3 program rounds distributed $1,094,662 (matching funds plus crowdfunding) to 471 projects. Big thank you to the Dev Con team, Polygon, & Arbitrum for supporting Ethereum Infrastructure, Open Source Software, and Web3 Community Builders!


We also had an amazing 9 community rounds and 9 independent rounds running at the same time, breaking the Gitcoin record for the most concurrent rounds! Special gratitude goes to all our partners, especially our community round runners at the Climate Coordination Network, Arbitrum Citizens, Metagov (Governance Research), Token Engineering Commons, OpenCivics, Mask Network (Web3 Social), Meta Pool, and 1inch.

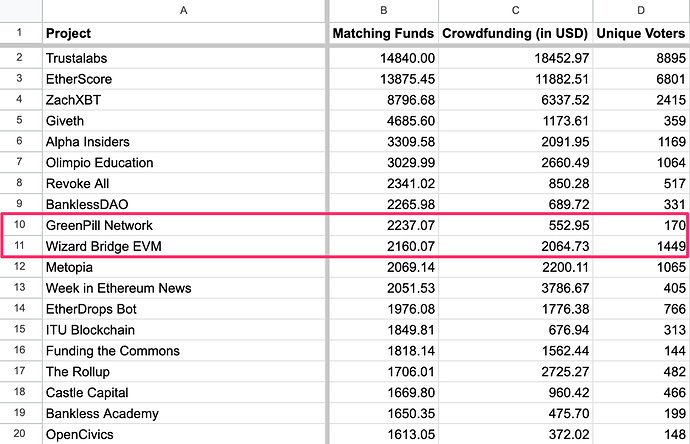

| Round | Matching Pool | Matching Cap | Crowdfund |
|---|---|---|---|
| Open Source Software | $200,000.00 | 7.420% | $297,252 |
| Ethereum Infrastructure | $200,000.00 | 10% | $58,723 |
| Web3 Community & Education | $200,000.00 | 7.420% | $138,687 |
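The Matching Cap column limits how much of a round’s matching pool any single project can receive. As a rough illustration, here is one common way such a cap can be enforced, assuming the cap is a percentage of the round’s matching pool and that any excess is redistributed pro rata to projects still under the cap. The redistribution rule and the function name `apply_matching_cap` are hypothetical, not a description of Gitcoin’s payout code.

```python
def apply_matching_cap(
    matches: dict[str, float], pool: float, cap_pct: float
) -> dict[str, float]:
    """Limit each project's match to cap_pct percent of the pool.

    Hypothetical rule: any excess above the cap is redistributed to the
    projects still under the cap, in proportion to their current match.
    """
    cap = pool * cap_pct / 100.0
    result = dict(matches)
    capped: set[str] = set()
    while True:
        # Total amount currently sitting above the cap.
        excess = sum(max(0.0, v - cap) for v in result.values())
        if excess <= 1e-9:
            break
        # Clip every over-cap project to the cap and mark it as capped.
        for project, value in result.items():
            if value > cap:
                result[project] = cap
                capped.add(project)
        uncapped_total = sum(v for p, v in result.items() if p not in capped)
        if uncapped_total == 0:
            break  # nobody left to receive the excess
        # Hand the excess to uncapped projects pro rata.
        for project in result:
            if project not in capped:
                result[project] += excess * result[project] / uncapped_total
    return result


print(apply_matching_cap({"a": 60.0, "b": 25.0, "c": 15.0}, pool=100.0, cap_pct=40.0))
# {'a': 40.0, 'b': 37.5, 'c': 22.5}
```

Redistributing can push a previously uncapped project over the cap, which is why the sketch loops until no excess remains.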

Each Gitcoin round sees improvements over the last but this one feels like a turning point in many ways. Some of the new features and additions include:

-

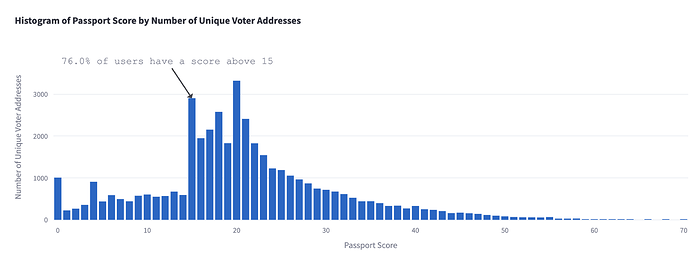

Passport Sliding Scale: Rather than having passport scores resolve to a binary “pass” or “fail” result to determine whether a donor gets matched, GG19 had a new feature where once scores were over a certain threshold(15), an increase in the score would result in an increased matching impact. 76.0% of wallets qualified for this round, an increase of 4% from last round.

-

Matching Estimates: Donors could now see an estimate of their donation’s impact on a project’s matching amount.

-

Explorer Landing Page: explorer.gitcoin.co’s beautiful redesign also improved search and sort functionality

-

Collections: created a way for donors to delegate funding decisions

-

Report Cards: provided round operators a new channel to communicate publicly about their round

-

Passport UI Improvements: sleek new interface makes it easier to see how you can earn points to increase your unique humanity score.

-

Passport Scoring Improvements: continuous adjustments to the stamp scoring models are adapting to sybil strategies, making it harder for them and easier for real humans
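To make the Passport sliding scale concrete, here is a minimal sketch. Only the threshold of 15 comes from this post; the linear ramp, the full-credit score of 25 (`FULL_SCORE`), and the function names are hypothetical assumptions chosen for illustration, not the actual GG19 curve.

```python
THRESHOLD = 15.0   # from the post: below this score a donor is not matched
FULL_SCORE = 25.0  # hypothetical: score at which a donor reaches full weight


def passport_weight(score: float) -> float:
    """Hypothetical sliding-scale weight in [0, 1] for a Passport score."""
    if score < THRESHOLD:
        return 0.0
    # Above the threshold, weight grows linearly with score until it hits 1.
    return min(1.0, score / FULL_SCORE)


def matchable_amount(donation: float, score: float) -> float:
    """Portion of a donation that counts toward the matching calculation."""
    return donation * passport_weight(score)


for s in (10.0, 15.0, 20.0, 30.0):
    print(s, passport_weight(s))
```

The design point is the shape, not the numbers: a binary pass/fail jumps from 0 to full weight at the threshold, while a sliding scale keeps rewarding donors who strengthen their Passport beyond it.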

Read more here:

- GG19 OSS Round Review: Reflections

- GG19 Web3 Community and Education Round Review: Reflections

- GG19 Eth Infra Round Review: Reflections

Kudos to everyone who worked hard to make this round a success! There are many people behind the scenes at Gitcoin whose work makes it possible to fund what matters. Thank you!!

Round and Results Calculation Details

The complete list of final results & payout amounts can be found here. Below, we’ll cover how these results were calculated and other decisions.

Post-round mechanism selection had a $350k financial impact: $175k was taken from projects that saw over-coordinated or sybil activity and given to other projects, so reductions and increases together total $350k.
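Because the matching pool is fixed, every dollar removed from one project is added to another, so an impact figure that sums absolute changes counts each redistributed dollar twice. This is our reading of how the $350k and $175k figures relate; the sketch below uses made-up payouts and a hypothetical helper, `mechanism_impact`, to show the arithmetic.

```python
def mechanism_impact(
    before: dict[str, float], after: dict[str, float]
) -> tuple[float, float]:
    """Compare two payout lists that split the same pool across the same projects.

    Returns (total_shift, moved): total_shift sums every change in absolute value,
    so each redistributed dollar is counted twice (once taken, once given);
    moved counts only the reductions.
    """
    total_shift = sum(abs(after[p] - before[p]) for p in before)
    moved = sum(before[p] - after[p] for p in before if after[p] < before[p])
    return total_shift, moved


# Hypothetical three-project round with a $500 pool.
before = {"x": 300.0, "y": 100.0, "z": 100.0}
after = {"x": 200.0, "y": 150.0, "z": 150.0}
print(mechanism_impact(before, after))  # (200.0, 100.0)
```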

Next Gen Quadratic Funding: Collusion-Resistance Inside The Mechanism

We introduced post-round sybil squelching a few years ago as part of our defense against the dark arts of sybil attackers and airdrop farmers. This process involved the Gitcoin team using on- and off-chain data, machine learning, and manual verification to find sybils and sockpuppet accounts and remove them from the matching distribution. Because our methods only worked as long as attackers didn’t know how we found them, they had to be closed source. This round, with an improved mechanism, we found that our closed-source solution improved results by only 5 to 20%. That’s why we’re really glad to drop it entirely. Instead, we’ll draw attention to the open source code we use to calculate quadratic funding results.

About a year ago, @joel_m, @GlenWeyl, and @erich published an innovative paper in which they designed collusion-resistant methods for quadratic funding. We recently began implementing their strategies, and they’ve proven highly effective: we reduced the match of the most suspicious projects by up to 85% and redirected those funds to other projects.

Quadratic funding helps us solve coordination failures by giving a community a way to allocate funds toward the projects it believes should be funded. In its base form, QF assumes people make independent decisions. Colluding groups can exploit this assumption by aligning their funding choices to unfairly influence the distribution of matching funds.
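For reference, here is the textbook QF formula in code, which makes the independence assumption concrete: every contribution enters the square-root sum as if it came from a separate person. This is a minimal sketch scaled to a fixed pool, not Gitcoin’s production calculation; the function name `qf_matches` and the proportional scaling step are illustrative choices.

```python
from math import sqrt


def qf_matches(contributions: dict[str, list[float]], pool: float) -> dict[str, float]:
    """Textbook quadratic funding, scaled to a fixed matching pool.

    Each project's ideal subsidy is (sum of sqrt(c_i))**2 - sum(c_i); every
    contribution is treated as coming from an independent donor. Subsidies
    are then scaled so they add up to the pool.
    """
    ideal = {
        project: sum(sqrt(c) for c in amounts) ** 2 - sum(amounts)
        for project, amounts in contributions.items()
    }
    total = sum(ideal.values())
    if total == 0:
        return {project: 0.0 for project in contributions}
    return {project: pool * v / total for project, v in ideal.items()}


# Four $1 donors beat one $16 donor, even though B raised four times as much.
print(qf_matches({"A": [1.0, 1.0, 1.0, 1.0], "B": [16.0], "C": [4.0, 4.0]}, pool=100.0))
# {'A': 60.0, 'B': 0.0, 'C': 40.0}
```

The same property that rewards broad support is what colluders exploit: splitting one wallet’s money across many coordinated wallets looks, to this formula, like broad independent support.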

Connection-oriented cluster matching (COCM) doesn’t make this assumption. Instead, it estimates how coordinated groups of donors are likely to be, based on the social signals they have in common. Projects backed by more independent agents receive greater matching funds. Conversely, if a project’s support network shows higher levels of coordination, its matching funds are reduced, encouraging self-organized solutions within more coordinated groups.
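COCM itself goes further, also weighing how connected different clusters are to one another. The sketch below shows only plain cluster matching, a simpler relative: donations are pooled within each cluster before the square root is taken, so a tightly coordinated group counts roughly like a single donor. The cluster assignments are supplied by hand here (an assumption), and `cluster_match_subsidy` is a hypothetical name; this is not the COCM code Gitcoin ran.

```python
from collections import defaultdict
from math import sqrt


def cluster_match_subsidy(
    donations: list[tuple[str, float]], cluster_of: dict[str, str]
) -> float:
    """Plain cluster match for a single project (not COCM itself).

    Donations are first summed within each cluster; the square roots of the
    cluster totals are then added and squared, and what donors paid is
    subtracted. Donors missing from cluster_of count as a cluster of one.
    """
    totals: dict[str, float] = defaultdict(float)
    for donor, amount in donations:
        totals[cluster_of.get(donor, "solo:" + donor)] += amount
    funding = sum(sqrt(t) for t in totals.values()) ** 2
    return funding - sum(amount for _, amount in donations)


donations = [("a", 1.0), ("b", 1.0), ("c", 1.0), ("d", 1.0)]
print(cluster_match_subsidy(donations, {}))  # 12.0: four independent donors
print(cluster_match_subsidy(donations, {d: "ring" for d in "abcd"}))  # 0.0: one tight group
```

With every donor in their own cluster this reduces to standard QF; with everyone in one cluster the subsidy disappears, and partial clustering lands in between.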

One open area of research examines which data points make the best social signals. For this round, we used the donation choices themselves as those signals. We also studied alternatives such as Passport stamp data and POAP data. If you’re interested in conversations on clustering data or mechanism developments, please join this Telegram group.
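As an illustration of how donation choices alone can act as a social signal, the sketch below scores each pair of donors by how much their sets of funded projects overlap and then links highly similar donors into groups. The post does not say which similarity measure or cutoff GG19 used, so Jaccard overlap, the 0.5 threshold, and all function names here are hypothetical stand-ins.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Share of projects two donors have in common (0 = disjoint, 1 = identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def donor_similarity(choices: dict[str, set[str]]) -> dict[tuple[str, str], float]:
    """Pairwise similarity of donors, using only which projects each one funded."""
    donors = sorted(choices)
    return {
        (x, y): jaccard(choices[x], choices[y])
        for i, x in enumerate(donors)
        for y in donors[i + 1 :]
    }


def group_donors(choices: dict[str, set[str]], threshold: float) -> dict[str, str]:
    """Link donors whose similarity is at least `threshold` (hypothetical cutoff)
    and return a cluster label for each donor, via a small union-find."""
    parent = {d: d for d in choices}

    def find(d: str) -> str:
        while parent[d] != d:
            parent[d] = parent[parent[d]]  # path halving
            d = parent[d]
        return d

    for (x, y), sim in donor_similarity(choices).items():
        if sim >= threshold:
            parent[find(x)] = find(y)
    return {d: find(d) for d in choices}


choices = {
    "alice": {"p1", "p2", "p3"},
    "bob": {"p1", "p2", "p3"},
    "carol": {"p3", "p4"},
}
print(donor_similarity(choices))
print(group_donors(choices, threshold=0.5))
```

Here alice and bob funded identical sets and end up in one group, while carol’s partial overlap keeps her separate. A hard threshold is the bluntest option; graded similarity, as COCM uses, avoids an all-or-nothing cutoff.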

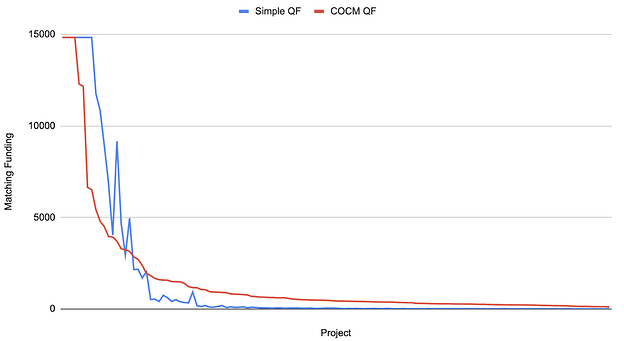

In addition, as an unintended side-effect of the COCM mechanism most projects get more funding. As an example, here is the chart of per-project funding for the Web3 Open Source Software round:

We’re directing more of the funding to the long-tail of projects.

For more details about pluralistic QF methods, check out this paper and/or these podcasts.

Code of Conduct

As a reminder to all projects, quid pro quo is explicitly against our agreement. Providing an incentive or reward for individuals to donate to specific projects can affect your ability to participate in future rounds. If you see someone engaging in this type of behavior, please let us know.

Coordination Technology == Social Technology

For us to fund what matters, we need to use our collective voices. Let’s chat below on these results, the mechanism choice, and your experiences in the round. What did you see working well? What could be improved?

Next Steps

We plan to distribute matches before the holidays, by the end of next week. We are leaving 5 days for discussion on this post and, barring any major issues with these results, will process payouts shortly thereafter. But that doesn’t mean the conversation ends there! We want it to continue so it can help shape strategies and improvements for future rounds. Cheers!